A Gentle Intro to TensorFlow for Theano Users

Welcome to the LeNet tutorial using TensorFlow.

For a long-time Theano user, migrating to TensorFlow is not that easy.

Recently, TensorFlow has been showing strong performance, leading many to defect from Theano to TensorFlow.

I am one such defector.

This repository contains an implementation of LeNet, the hello world of deep CNNs, and is my first exploratory experiment with TensorFlow.

It is a typical LeNet-5 network trained to classify the MNIST dataset.

It is a simple implementation, similar to the one found in the tutorial that comes with TensorFlow and in most other public tutorials.

This one is, however, modularized so that it is easy to understand and reuse.

The documentation website that comes along with this repository might help users migrating from Theano to TensorFlow, as I was while implementing this repository.

To that end, I make explicit comparisons between the two whenever possible.

TensorFlow has many contrib packages that sit at a higher level of abstraction than Theano.

I have avoided using those whenever possible and stuck with the fundamental TensorFlow modules for this tutorial.

While this tutorial is most useful for Theano-to-TensorFlow migrants, it will also be useful for those who are new to CNNs. There are small notes and materials explaining the theory and math behind the working of CNNs and their layers. While these are in no way comprehensive, they might help those who are unfamiliar with CNNs but simply want to learn TensorFlow and would rather not spend time on a semester-long course.

Note

The theoretical material in this tutorial is adapted from a forthcoming book chapter on Feature Learning for Images.

To begin with, it might be helpful to run the entire code in its default setting. This will let you confirm that the installation went well and that your machine is set up correctly.

You will need TensorFlow and NumPy installed.

For advanced uses you might require other tools, which are listed in the requirements.txt file that ships along with this code.

First, clone the repository into some directory as follows:
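Before running anything, a quick check that the two required packages are importable can save some confusion. The snippet below is only a minimal sketch and is not part of the repository: the helper name `missing_packages` is hypothetical, and it assumes Python 3.4 or newer (for `importlib.util.find_spec`). It only tells you whether a package can be found, not whether its version is compatible with this code.

```python
import importlib.util


def missing_packages(names):
    """Return the names from `names` that cannot be found for import.

    Hypothetical helper for illustration; not part of the tf-lenet code.
    """
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    # tensorflow and numpy are the two packages this tutorial needs.
    missing = missing_packages(["tensorflow", "numpy"])
    if missing:
        print("Missing packages:", ", ".join(missing))
    else:
        print("tensorflow and numpy are both importable.")
```

If anything is reported missing, install it (for instance from the requirements.txt file) before moving on.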

git clone http://github.com/ragavvenkatesan/tf-lenet

cd tf-lenet

You can then run the entire code in one of two ways.

Either run the main.py file like so:

python main.py

or type in the contents of that file, line by line, in a Python shell:

from lenet.trainer import trainer

from lenet.network import lenet5

from lenet.dataset import mnist

dataset = mnist()

net = lenet5(images = dataset.images)

net.cook(labels = dataset.labels)

bp = trainer(net, dataset.feed)

bp.train()

Once the code is running, set up TensorBoard to observe results and outputs:

tensorboard --logdir=tensorboard

If everything went well, TensorBoard should be populated with content.

Open a browser and enter the address 0.0.0.0:6006 to open TensorBoard.

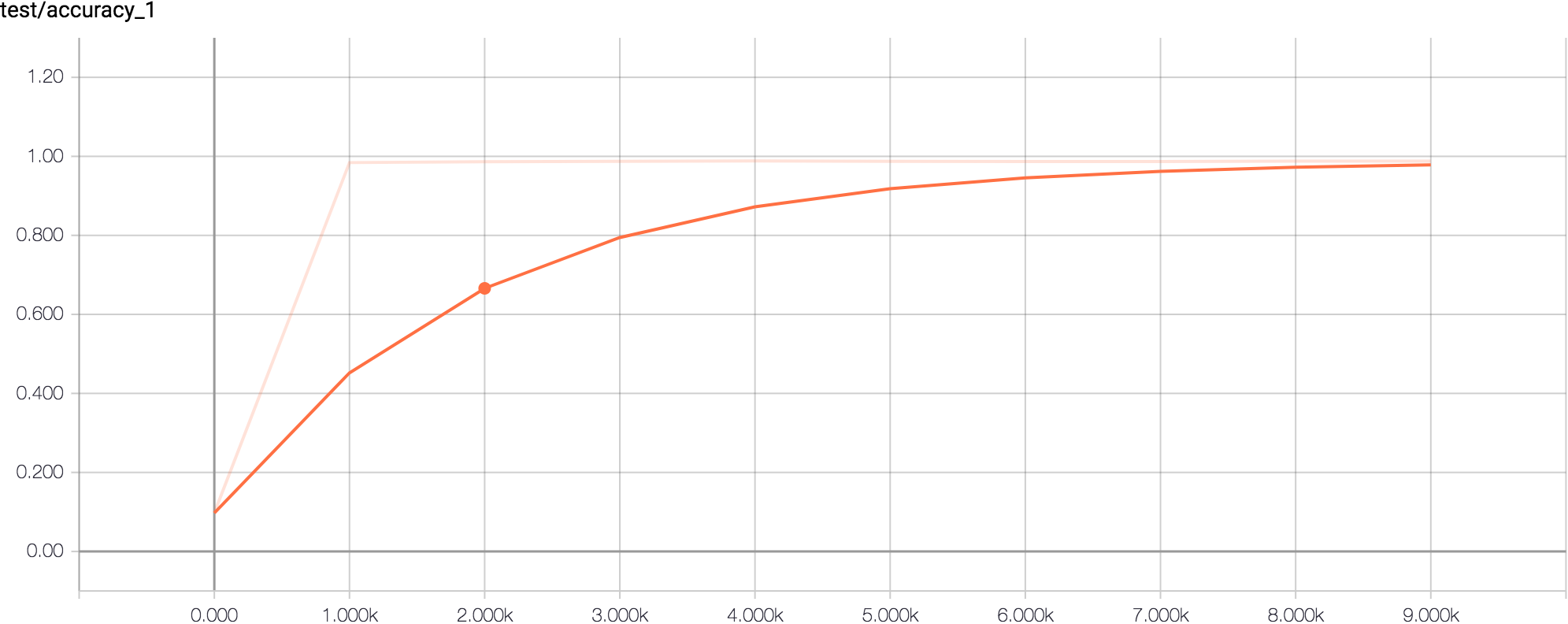
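If the page does not load, it can help to check whether anything is listening on the TensorBoard port at all. The following is a rough sketch, not part of the repository: the helper name `is_port_open` is hypothetical, and it assumes TensorBoard runs on the same machine and can be reached on the loopback address 127.0.0.1 at port 6006, the port used in the address above.

```python
import socket


def is_port_open(host="127.0.0.1", port=6006, timeout=1.0):
    """Return True if a TCP connection to host:port succeeds within `timeout`.

    Hypothetical helper for illustration; the defaults assume a local
    TensorBoard listening on port 6006.
    """
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


if __name__ == "__main__":
    if is_port_open():
        print("Something is listening on port 6006.")
    else:
        print("Nothing is reachable on port 6006; is tensorboard running?")
```

A successful connection only shows that some process is listening on that port; it does not confirm that the process is TensorBoard or that the logs were written.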

The accuracy graph in the scalars tab under the test column will look like the following:

This implies that the network trained fully, has achieved about 99% accuracy, and that everything is normal. From the next section onwards, I will go into detail on how I built this network.

If you are interested, please check out my Yann Toolbox, which is written completely in Theano. Have fun!